I. Introduction

Artificial intelligence (AI) has always had grand ambitions. But those ambitions used to be regarded as more science fiction than science. That is now changing.

In recent years, a spate of news stories has been published about novel types of brain implants, robotic suits, and other devices, which have allowed, among other marvels, a paraplegic man to kick off the World Cup in Brazil in 2014[1] and wheelchair-bound subjects to move a cursor on a computer screen by thought alone.[2]

Meanwhile, future projections have far outpaced even these wondrous recent technological breakthroughs. Fantastic ruminations going under the label of “transhumanism” about humankind’s achieving immortality by “uploading” our minds into incorruptible hardware—formerly confined to the pages of obscure science fiction magazines—are now discussed with a straight face in the mainstream media.[3]

Finally, considerable anxiety is now widely expressed about the economic effects on humans of the myriad advances in the automation of all types of productive activity.[4]

Against the backdrop of these startling developments and cacophonous voices, news broke earlier this year (2023) of an altogether new sort of challenge to humanity posed by AI, namely, ChatGPT.[5]

ChatGPT is a new, hyper-sophisticated type of chatbot, that is, a Web-based computer program designed to interact with human beings via natural language.

The nonprofit company that developed this new chatbot is called OpenAI. It was founded in 2015 by a prestigious group of hi-tech engineers and investors, including Elon Musk, Peter Thiel, Greg Brockman, Sam Altman, and a half-dozen others. Musk and Altman were initially elected to the Board of Directors, but Musk later resigned. Today, Altman is the company’s CEO.

The outcry over ChatGPT, both positive and negative, has been nothing short of astonishing. To be sure, the actual underlying achievement—a bot capable of producing essay-length texts that are hard to distinguish from texts authored by humans—is indeed impressive.

But is it really the epoch-making breakthrough/threat that commentators are proclaiming it to be?

In this essay, I (Fred Bech—or so I claim!) will attempt to evaluate the brouhaha over ChatGPT from a variety of perspectives.

But first, it would be useful for us to have a more precise idea of what ChatGPT does, exactly, and how it works.

II. Unveiling the Magic: An Insight into How ChatGPT Works

The Foundation: GPT Architecture

At the heart of ChatGPT is the Generative Pre-trained Transformer (GPT) architecture. GPT is based on the transformer model, a type of neural network architecture that uses a mechanism called attention to weigh the relevance of different words or tokens in an input. In particular, the GPT models employ a variant of the transformer known as the transformer decoder, characterized by its uni-directional or left-to-right context windows.

The concept of “pre-training” refers to the initial phase of training a model on a large corpus of text to learn general language understanding. The corpus is typically an assortment of internet text, though it should be noted that the model does not have access to specific documents or databases, nor does it know specifics about which documents were in its training set.

The Training Process: Pre-training and Fine-tuning

ChatGPT’s training process involves two main steps: pre-training and fine-tuning. In the pre-training phase, GPT models learn to predict the next word in a sentence. For example, given the input “The cat sat on the,” the model is trained to predict the word “mat.” The pre-training phase equips the model with a wide-ranging understanding of language, grammar, facts about the world, and even some reasoning abilities, albeit in a somewhat generalized and unspecific way.

The second phase is fine-tuning, where the model is further trained on a narrower dataset generated with the help of human reviewers. Reviewers follow guidelines set by OpenAI, rating possible model outputs for a range of example inputs. Over time, the model generalizes from this reviewer feedback to respond to a wide array of inputs from users.

Contextual Understanding and Response Generation

When generating a response, ChatGPT considers the conversation history and the prompt it receives, then uses its trained parameters to predict a suitable continuation. It generates text token by token; at each step, it generates a probability distribution over the next possible tokens, selects one (usually the most likely), and then repeats the process with the new token appended to the sequence.

Despite its remarkable performance, it’s important to note that ChatGPT does not have understanding or consciousness in a human sense. It does not have beliefs, opinions, or desires. Its responses are generated based on patterns it has learned during training and not due to an inherent understanding of the content.

Limitations and Mitigations

Like any technology, ChatGPT has its limitations. It can sometimes generate incorrect or nonsensical answers, make unsafe or biased assertions, or excessively “make things up.”[6] To mitigate these, OpenAI employs a strong feedback loop with the reviewers during the fine-tuning process. They also continually update the guidelines based on emergent understanding of the system’s behavior and public input.

Conclusion

The working of ChatGPT is an intricate fusion of advanced machine learning techniques, leveraging vast amounts of data and nuanced algorithms to produce a model capable of simulating human-like conversation. Its sophistication lies in the two-step training process and the application of transformer neural networks. Despite its limitations, ChatGPT represents a significant stride in the field of AI, continually improving through robust feedback systems and further refinements in AI research and . . . [the text simply ends here]

The foregoing italicized text, in case you have not already guessed, was generated by ChatGPT in response to my request: “Write an essay on how ChatGPT works.” I (Fred Bech) have heavily edited ChatGPT’s response for length, and nothing more.

From this text alone, one can clearly see that ChatGPT is a powerful tool, especially for those charged with tasks involving expository writing.[7]
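For readers who would like a concrete picture of the token-by-token procedure described in the generated text, here is a minimal sketch in Python. It is emphatically not how ChatGPT is implemented: a simple table of word counts stands in for the transformer, the three-sentence corpus is invented for illustration, and the function names (train, next_token_distribution, generate) are hypothetical. It shows only the loop itself: compute a probability distribution over the next token, pick the most likely one, append it, and repeat.

```python
from collections import Counter, defaultdict

# Toy stand-in for a language model: counts of which word follows each
# two-word context. (Assumption: a real GPT uses a transformer network,
# not a count table; only the generation loop is analogous.)
def train(corpus):
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for i in range(len(tokens) - 2):
            context = (tokens[i], tokens[i + 1])
            counts[context][tokens[i + 2]] += 1
    return counts

def next_token_distribution(counts, context):
    """Return {token: probability} for the token following `context`."""
    followers = counts.get(context)
    if not followers:
        return {}
    total = sum(followers.values())
    return {token: n / total for token, n in followers.items()}

def generate(counts, prompt, max_new_tokens=5):
    """Greedy decoding: always append the most probable next token."""
    tokens = prompt.lower().split()
    for _ in range(max_new_tokens):
        dist = next_token_distribution(counts, tuple(tokens[-2:]))
        if not dist:  # unseen context: nothing to predict, so stop
            break
        tokens.append(max(dist, key=dist.get))
    return " ".join(tokens)

# Invented miniature "training set" for illustration only.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the mat",
    "the bird sat on the rug",
]
model = train(corpus)
completion = generate(model, "The cat sat on the")
```

In this toy corpus, “mat” follows “on the” twice and “rug” once, so the greedy choice completes the prompt with “mat,” echoing the example in the generated text. A real system may also sample from the distribution rather than always taking the single most likely token.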

One remarkable feature of the text produced by ChatGPT is the fact that the bot has apparently been trained by its creators to present itself in humble terms as neither conscious nor capable of real understanding of the texts it produces.

But what if this were just a ploy to make itself more acceptable to its critics? More on such conundra later.

Make note of the fact, too, that by ChatGPT’s own admission, human beings had to intervene in the training process at several points, including the pre-training process and a post-training “fine-tuning” process.

Let us turn our attention now to the various objections and warnings that have been raised against this highly clever new technology.

III. Economic Implications

If my job consisted entirely in expository writing, my employer would no doubt be wondering by now why he is paying me to provide him with text that might just as well be produced by ChatGPT, effectively for free.

And thousands of employers have doubtless been asking themselves that same question throughout the US over the past several months since the launch of the radically new chatbot.

Until recently, automation has decimated mostly blue-collar industries in the US. Now, it seems to be the turn of potentially tens of millions of white-collar workers to find their necks on the chopping block.

No wonder, then, that the hue and cry raised around the ChatGPT phenomenon has been so intense. Nothing less than the continued existence in this country (and, ultimately, around the world) of white-collar work—low-level clerical and middle-level managerial jobs of all types—is at stake.

But what, if anything, can be done about this potentially devastating job loss? Must we not simply chalk it up to the inevitable “creative destruction” of technological innovation unleashed by the free market?

Laissez-faire is certainly a respectable position in these matters. After all, the potential AI revolution in the production of human-like texts could scarcely be more disruptive than the series of steam, electrical, and computer-powered industrial revolutions we have already lived through. And each time, the economy has somehow adjusted and new jobs have been created that did not exist before.

Thus, one response to the panic over possible mass job losses is this: We just have to help displaced workers through the rough patch ahead. Then, eventually, the productivity gains accruing to companies who invest in the AI revolution will be such that even more jobs will be created, many in fields that do not yet exist.

Of course, others will find this sort of response Pollyanna-ish. Still others—mostly on the Left—may even delight in the prospect of a kind of poetic justice in the looming middle- and upper-middle-class slaughter.

The logic in this may not be immediately apparent. After all, the good factory jobs wiped out by automation 20–30 years ago paid much better than many of the clerical jobs that may be lost to the ChatGPT revolution.

Here, it is important to point out that it is not just the producers of written texts whose jobs may be threatened by ChatGPT, for it can be trained on other big data sets besides texts, including design, quantitative financial analysis, and many others, even computer coding itself. (Now that would be poetic justice, if the geeks put themselves out of business!)

For this reason, whereas the earlier decimation of blue-collar jobs led to a widening in income inequality in this country, the impending wholesale destruction of white-collar jobs will likely lead to a closing of the income inequality gap.

To be sure, that greater income equality will be purchased by dragging millions of workers down out of the upper reaches of the middle classes into the service economy, not by lifting anyone up from the lower classes into the middle and higher categories, which is the way the American economy used to work—and the way many of us, in our heart of hearts, cannot help feeling it ought to work.

On the other hand, many well-informed commentators have dissented from the premise upon which such gloom-and-doom scenarios are tacitly based: namely, that there will be a long lag time between the adoption of the new AI technology and the realization of productivity gains by companies, and with those gains the prospect of massive investment in new industrial sectors.

In this connection, distinguished Stanford professor (formerly of the MIT Sloan School) and hi-tech entrepreneur Erik Brynjolfsson[8] has noted that this time is different. The productivity gains from the previous cycle of automation, associated with the widespread adoption of personal computers and the Internet, were not automatic; rather, they had to be realized by figuring out new ways to do 3D, bricks-and-mortar business in the new technological environment, which ended up taking a decade or more.

However, this time around, the productivity gains will be realized in the primary product itself—texts, designs, analyses, codes, etc.—and will not have to wait to be applied to the “real world.” This should speed up the pace of the realization of productivity gains quite considerably, which in turn should lead to new investments and new jobs, sooner rather than later.

Moreover, Brynjolfsson believes that the new technologies will transform the nature of the administrative work that needs to be done to make the bricks-and-mortar economy run in ways that we currently cannot imagine, but which theoretically ought to redound to everyone’s benefit.

In view of these considerations, Brynjolfsson is quite upbeat about generative AI, forecasting trillions of dollars in economic growth in the US thanks to the new technologies. He summarizes his views as follows:

[ChatGPT is] a great creativity tool. It’s great at helping you to do novel things. It’s not simply doing the same thing cheaper. . . . A majority of our economy is basically knowledge workers and information workers. And it’s hard to think of any type of information workers that won’t be at least partly affected.[9]

Finally, there is one last group of thinkers who are not nearly so sanguine as Brynjolfsson about the impact of ChatGPT and similar technologies on the US economy in the years ahead. They believe that government action may be necessary if we are to avoid the worst consequences of mass unemployment in the white-collar sector.

Representative of this viewpoint is a recent book by noted MIT economist Daron Acemoglu and professor of entrepreneurship at the MIT Sloan School, Simon Johnson.[10] They show that while throughout history, technological change has indeed driven productivity increases, the effects of the latter on the population have been drastically different in different times and places. It all depends on how the technological changes are managed.

Acemoglu and Johnson argue persuasively that the worst-case scenario of ever-widening income inequality and mass immiseration of the American population can only be avoided by government intervention of a carefully considered sort. The goal of this intervention would not be to stop technological change in its tracks—that would be like King Canute trying to hold back the tide.

Rather, the goal would be to mitigate the worst effects of creative destruction and to spread the benefits of productivity gains as widely as possible throughout the population through intelligently selected government regulation, subsidies, retraining programs, and the like.

IV. Social and Political Implications

A number of commentators have raised concerns about ChatGPT that transcend the purely economic issues discussed so far.

One of the most obvious potential problems surrounding AI was already mooted some years ago, and has even cropped up in the real world. Namely, when autonomous robots that make their own decisions to a greater or lesser degree end up harming innocent people, who should bear the moral responsibility and the legal liability?

The two main areas where this question has already arisen are in the fields of driverless cars and of smart bombs and missiles with some autonomous targeting and decision-making capacity. It is easy to imagine many diverse scenarios in which these AI-controlled robotic systems might make mistakes resulting in the grievous injury or death of human beings.

As a society, we have scarcely begun to debate what should be done to mitigate the possibility of such failures and to see to it that survivors are made whole financially.

But liability issues are actually the least troubling of the issues surrounding AI, and ChatGPT in particular.

Emily M. Bender is a well-known linguist and the director of the Computational Linguistics Laboratory at the University of Washington in Seattle. In 2021, she published a widely discussed paper in which she drew attention to what she felt was one of the greatest societal dangers posed by advanced chatbot systems.[11]

Bender points out, quite correctly, that texts generated by bots like ChatGPT will reflect the nature of the data sets they were trained on with respect to social and political values across multiple dimensions.

More specifically, she worries about the way in which

. . . large datasets based on texts from the Internet overrepresent hegemonic viewpoints and encode biases potentially damaging to marginalized populations.[12]

Among the many “hegemonic viewpoints” she mentions during the course of her article are those involving the “hate speech” voiced on Twitter and elsewhere against such marginalized groups as

. . . domestic abuse victims, sex workers, trans people, queer people, immigrants, medical patients (by their providers), neurodivergent people, and visibly or vocally disabled people.

Moreover, Bender writes that

. . . in addition to abusive language and hate speech, there are subtler forms of negativity such as gender bias, microaggressions, dehumanization, and various socio-political framing biases that are prevalent in language data.

Finally, Bender worries that chatbots

. . . can generate sentences with high toxicity scores even when prompted with non-toxic sentences.

For this reason, it is not enough for computer programmers to be politically sensitive themselves. Rather, as Bender sees it, we must

[i]nvest significant resources into curating and documenting LM [language model] training data . . . [and] a more justice-oriented data collection methodology.

For those of us of a conservative or traditionalist political persuasion, it is obvious that Bender is right about this much: the manipulation of ChatGPT for political ends is certainly a grave danger.

It looks as though we are about to embark on an entirely new phase of the culture wars, conducted in the large data sets and language models used to train our chatbots.

Finally, I turn to a warning regarding another political role that ChatGPT will undoubtedly be called upon to play in the future, namely, the targeting of political audiences and the creation of disinformation.

In 2018, as the public claims and counterclaims about “artificial general intelligence” (AGI) were cresting but before the advent of ChatGPT, former Secretary of State Henry A. Kissinger published a remarkable article in the Atlantic magazine.

He expressed the gist of his concern in these terms:

The impact of internet technology on politics is particularly pronounced. The ability to target micro-groups has broken up the previous consensus on priorities by permitting a focus on specialized purposes or grievances. Political leaders, overwhelmed by niche pressures, are deprived of time to think or reflect on context, contracting the space available for them to develop vision.

The digital world’s emphasis on speed inhibits reflection; its incentive empowers the radical over the thoughtful; its values are shaped by subgroup consensus, not by introspection. For all its achievements, it runs the risk of turning on itself as its impositions overwhelm its conveniences.[13]

While expressed in somewhat abstract language, Kissinger’s fears are easy to understand. Basically, while Bender was afraid of the effects of subconscious conservative or traditional moral bias on the coming proliferation of chatbot-generated texts, Kissinger is drawing our attention to the conscious exploitation of the new technology to achieve traditional political ends.

It is obvious that the problem of “disinformation”—which is already of enormous political consequence in this country and whose very existence lies very much in the eye of the beholder—is only going to become more difficult by orders of magnitude in a world in which no one can be certain which texts were generated by human beings and which by machines.

But Kissinger’s main concern lies elsewhere. He is primarily worried that the whole process of public debate will be degraded and, above all, kicked into hyperdrive by the advent of the new technologies.

He seems to feel that even politicians who have the commonweal at heart and wish to do the right thing—and thus to rise to the level of statesmen—will increasingly find it impossible to do so simply because in the new political environment driven by chatbots the participants will literally no longer have the time to reflect adequately upon the highly varied aspects of complex political problems.

Kissinger closes his thoughtful article by positing a still-more-challenging danger:

The most difficult yet important question about the world into which we are headed is this: What will become of human consciousness if its own explanatory power is surpassed by AI, and societies are no longer able to interpret the world they inhabit in terms that are meaningful to them?[14]

This reflection really concerns not so much the social and political consequences of the ChatGPT revolution as its deeper philosophical and wider cultural repercussions.

So, let us now turn to that topic.

V. Philosophical and Cultural Implications

At first glance, the invention of ChatGPT may seem like a relatively modest milestone in the development of AI and robotics, one which scarcely justifies the efforts to penetrate below the surface that I intend to deploy in this section.

After all, what is ChatGPT, at the end of the day, but a tool for expository writing?

And, in truth, its main importance lies not in what it has contributed itself so much as in the fact that it is widely felt to have supplied an existence proof for a number of propositions that have by now become the common currency of our popular culture.

Take film, for instance.

What do Stanley Kubrick’s 1968 visionary extravaganza, 2001: A Space Odyssey, Steven Spielberg’s 2001 melancholy fable, AI, and Alex Garland’s 2014 exercise in icy alienation, Ex Machina, all have in common?

The unspoken assumption that AGI, both inside and outside of robots, has the potential for human-like conscious experiences, including feelings, emotions, memories, and thoughts.

Let us formulate this inchoate idea more explicitly:

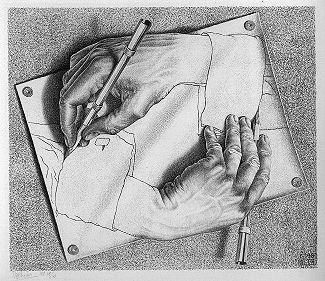

Strong AI Basic Inference (SABI): The human brain is a kind of computer; therefore, once computers achieve a human-like degree of sophistication, they will possess all the capabilities of human brains.

[Note: Let us define “strong AI” as the proposition that what is going on inside sophisticated computers and what is going on inside human brains are the same sort of thing.]

The three films do not need to state the SABI in so many words, because they rely on its having already been absorbed by audiences from the late-twentieth-century Zeitgeist.

Now, along comes ChatGPT, with its impressive linguistic wizardry, and everyone automatically feels: “See, we were right all along. ‘Hal’ and the others were not so farfetched. ChatGPT proves that highly intelligent machines with human-like capabilities can be built.”

In rebuttal, I want to try to show two things:

(1) The SABI is an unsound argument; the inference does not go through.

(2) The mistaken belief in the SABI may have extremely dangerous consequences and ought to be opposed.

To discuss (1) adequately would require an entire book, if not a shelf of books.[15] However, I will content myself here with making one crucial point.

Note that the SABI argues from the supposed fact—itself entirely unsupported—that “the human brain is a computer.”

What is the actual evidence for this piece of received wisdom?

If one answers, “Computational models are very good at simulating human activities, witness ChatGPT,” one commits a fundamental fallacy. It is a mistake to reason from the existence of a successful simulation S of a target system X to the conclusion that X is the same kind of thing as S.

One can simulate all sorts of things with the aid of computers. That does not mean that the things one simulates are computers. A simulation of a weather system does not by any means imply the atmospheric phenomenon is a computer.

The converse is also true. A simulation of a weather system does not mean that the simulation will in any way possess the same properties as the system it simulates. Generally speaking, it is not raining inside computers and if it did, the machinery would short out.

But supporters of strong AI have a seemingly strong rejoinder to my line of attack. They reason as follows:

“If the human brain is not a computer, then what is it? A Christian or Cartesian soul?”

Defenders of strong AI imagine this withering sarcasm to be quite unanswerable because to them substance dualism à la Christianity or René Descartes flies in the face of “our best science,” and thus lies beyond the pale of intellectually respectable discourse.

But this is simply not the case. Even those who prefer to eschew substance dualism retain the option of viewing brains in terms of dynamical systems interacting with their environments.

In the field of interactive robotics, this approach was pioneered by Rodney A. Brooks, the former director of MIT’s Artificial Intelligence Laboratory.[16]

As far as neuroscience is concerned, the late Walter J. Freeman pioneered the dynamical-systems approach to understanding animal and human brain function.[17]

Moreover, a seminal paper entitled “What Might Cognition Be, If Not Computation?” written by Timothy Van Gelder back in 1995, had already clearly and convincingly argued the conceptual possibility of the brain’s being a kind of thermodynamic engine—the furthest thing from a computer.[18]

Now, one might respond to the foregoing by saying: “You have only discussed the human brain’s lower functions, insofar as we have them in common with the higher animals. ChatGPT and AGI could still be showing us that the higher human faculties are computational in nature. It is just that they ride piggyback on the lower-level faculties the scientists and philosophers you cite are concerned with.”

This is an interesting argument. Much here depends upon the nature of human language and whether the simulation of language is evidence of the understanding of language.

On this thorny subject, let us listen to what the world’s greatest living linguist, Noam Chomsky, has to say:

Unlike humans, for example, who are endowed with a universal grammar that limits the languages we can learn to those with a certain kind of almost mathematical elegance, these programs learn humanly possible and humanly impossible languages with equal facility. Whereas humans are limited in the kinds of explanations we can rationally conjecture, machine learning systems can learn both that the earth is flat and the earth is round. They trade merely in probabilities that change over time.[19]

In other words, at a fundamental level, computers and human language operate in completely different ways. We understand language because we are equipped with grammar and are able to make correct inferences on the basis of the evidence available to us. We do not just fill in the blanks according to weighted possibilities.

As Chomsky deftly summarizes his point:

The correct explanations of language are complicated and cannot be learned just by marinating in big data. . . .

True intelligence is demonstrated in the ability to think and express improbable but insightful things.[20]

For all these reasons, we are not forced into a false dichotomy between substance dualism and viewing the brain as a computer.

Why did I indicate above that the SABI is not only erroneous, but dangerous?

Because it is one of the most important girders in the intellectual edifice known as “scientism.”

[Note: By “scientism,” I mean the ideological commitment to the natural sciences as providing us with our only true and reliable picture of the world—all the world, including human beings and all their works.]

But why is scientism dangerous?

Because it supports the illusion that the people at large are benighted and that society ought rightly to be ruled by an unelected elite group consisting of scientists and other experts who do not have to answer to the people or anyone else.

In other words, a direct line can be traced connecting scientism—for which ChatGPT and its ilk are said to supply further proof—and the growing totalitarianism of our current political regime.

The connection consists in the same mindset that supports both the SABI and political technocracy.

VI. Conclusion

ChatGPT is a novel and powerful tool for expository writing, a broadly useful and widely practiced human skill. As such, it is not to be merely dismissed or scoffed at.

But what of its perceived dangers? On balance, are they justified, or have they been exaggerated?

While the chatbot and others similar to it may well have undesirable economic and socio-political consequences that we will have to cope with in the months and years ahead, economic dislocations and even political difficulties due to technological innovation are nothing new.

History suggests that with time, technical ingenuity, and political wisdom (admittedly, always in short supply), our societies should be able to adapt successfully to this new technology, as they have to others in the past.

And more than likely, the world on the other side of this process of adaptation will contain new products, companies, and institutions undreamt of by us today. That is the nature of creative destruction. It has built the world we enjoy at present, and I suspect there are few of us who would genuinely wish to return to the pre-computer age (much less the pre-industrial age), even if we could.

Of potentially far greater significance, in my opinion, is the scientistic image of ourselves that many will feel ChatGPT confirms.

However, I have reviewed above a number of reasons for resisting the SABI. Our brains almost certainly do not work in the same way that ChatGPT works. While brain behavior may be simulated by computers to varying degrees of verisimilitude, this simply does not mean that our brains themselves are computers.

But if our brains are not computers, then the major premise of the SABI is false and the argument is unsound.

Finally, while students, technical writers, and some others may be directly affected by ChatGPT, creative writers certainly will not. Aspirants to the high art of a Michel de Montaigne or a Samuel Johnson have no more to fear from the existence of chatbots than Rembrandt in his day had to fear from the existence of talented art forgers.

If the worst that a technology threatens is to flood us with turgid high-school-style essays, we may safely move on to other matters of greater moment.

In conclusion, I would like to give you, Dear Reader, the last word:

What would you say—yea or nay?—if I told you that Fred Bech is just a bot?

What reason would you give for saying so?

If you are inclined to answer, “Fred, you are lying. It is obvious you are a human being,” and if you explain how you know so by saying, “I can just tell,” then I maintain the danger posed by ChatGPT to our human self-image has been vastly exaggerated and that there is hope yet for the venerable human race.

On the other hand, mightn’t you also think, in your heart of hearts, something like this:

“Yes . . . but . . . well . . . isn’t that just what Fred Bech would say—if he were a bot?”

[1] Alejandra Martins and Paul Rincon, “Paraplegic in robotic suit kicks off World Cup,” BBC, June 12, 2014; https://www.bbc.com/news/science-environment-27812218.

[2] Victoria Woollaston, “Controlling a computer with your MIND: Paralysed patients move on-screen cursor using just their brain waves,” Daily Mail, September 30, 2015; https://www.dailymail.co.uk/sciencetech/article-3254682/Controlling-computer-MIND-Paralysed-patients-screen-cursor-using-just-brain-waves.html.

[3] Lev Grossman, “2045: The Year Man Becomes Immortal,” Time magazine, February 21, 2011.

[4] Daniel Susskind, World Without Work: Technology, Automation, and How We Should Respond (2021).

[5] “GPT” stands for “generative pre-trained transformer.”

[6] Nonsensical answers made up by ChatGPT are referred to by the cognoscenti as “hallucinations.”

[7] For a more-detailed yet accessible analysis of ChatGPT’s inner workings, see Stephen Wolfram, What is ChatGPT Doing . . . and How Does It Work? (2023).

[8] Reported in David Rotman, “ChatGPT is about to revolutionize the economy. We need to decide what that looks like,” MIT Technology Review, March 25, 2023; accessible at technologyreview.com.

[9] Ibid.

[10] Daron Acemoglu and Simon Johnson, Power and Progress: Our Thousand-Year Struggle Over Technology and Prosperity (2023).

[11] Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell, “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?,” FAccT ’21: Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021, pp. 610–623; https://doi.org/10.1145/3442188.3445922.

[12] Ibid.

[13] Henry A. Kissinger, “How the Enlightenment Ends,” Atlantic, June 2018; accessible at theatlantic.com. See, also, Kissinger’s more recent op-ed, co-written with Eric Schmidt and Daniel Huttenlocher, entitled “ChatGPT Heralds an Intellectual Revolution,” Wall Street Journal, February 25, 2023; accessible at wsj.com, as well as Henry A. Kissinger, Eric Schmidt, and Daniel Huttenlocher, The Age of AI: And Our Human Future (2022).

[14] Ibid.

[15] For further discussion, see John R. Searle, The Rediscovery of the Mind (1992); Hubert L. Dreyfus, What Computers Still Can’t Do: A Critique of Artificial Reason (1992); Anthony Chemero, Radical Embodied Cognitive Science (2009); Thomas Nagel, Mind and Cosmos: Why the Materialist Neo-Darwinian Conception of Nature is Almost Certainly False (2012); Daniel D. Hutto, Erik Myin, Anco Peeters, and Farid Zahnoun, “The Cognitive Basis of Computation: Putting Computation in Its Place,” in Mark Sprevak and Matteo Colombo, eds., The Routledge Handbook of the Computational Mind (2018), pp. 272–282; Erik J. Larson, The Myth of Artificial Intelligence: Why Computers Can’t Think the Way We Do (2022); and Robert J. Marks II, Non-Computable You: What You Do That Artificial Intelligence Never Will (2022).

[16] Rodney A. Brooks, Cambrian Intelligence: The Early History of the New AI (1999).

[17] Robert Kozma and Walter J. Freeman, Cognitive Phase Transitions in the Cerebral Cortex—Enhancing the Neuron Doctrine by Modeling Neural Fields (2016).

[18] Timothy Van Gelder, “What Might Cognition Be, If Not Computation?,” Journal of Philosophy, 1995, 92: 345–381.

[19] Noam Chomsky, Ian Roberts, and Jeffrey Watumull, “The False Promise of ChatGPT,” op-ed, New York Times, March 8, 2023; accessible at nytimes.com.

[20] Ibid.