Review of Shoshana Zuboff’s The Age of Surveillance Capitalism

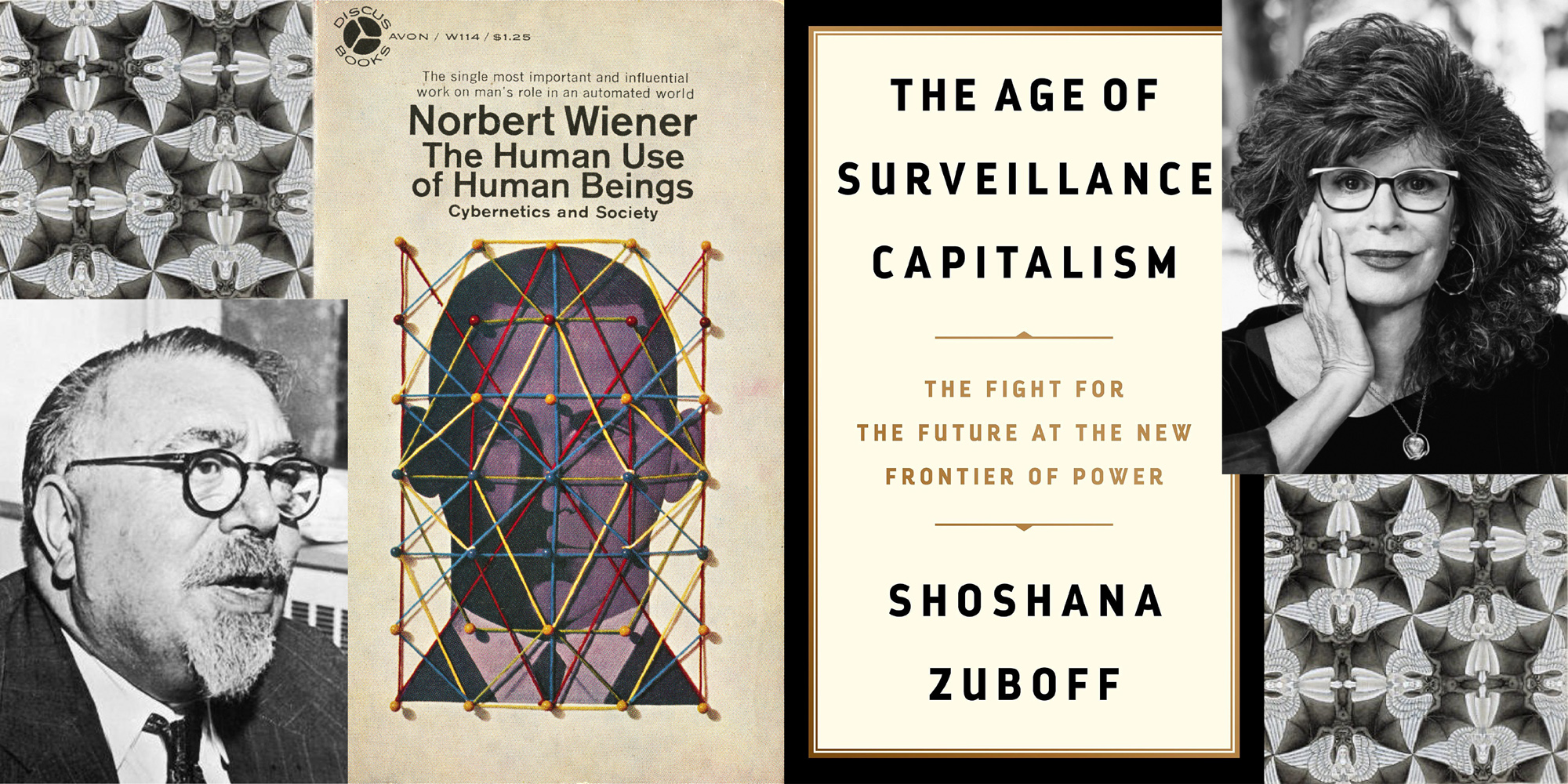

In setting the stage for this review of Shoshana Zuboff’s remarkable book, a brief selective history of the field of artificial intelligence will be helpful, especially in recalling the pioneering work of Norbert Wiener. “Artificial intelligence” (AI) is in common usage today, but the term is only several decades old. It was introduced in the 1950s and it entered the lexicon as part of an effort to rebrand another term, now mostly forgotten: cybernetics. The polymath Norbert Wiener coined cybernetics to refer to feedback loops that appear in biological and mechanical systems when they adjust behavior to input. Humans do this, argued Wiener, at the individual level (in our brains and bodies) and at societal scale, in culture writ large.

Cybernetics à la Wiener, the mathematician who worked on automatic piloting and navigational systems as part of the effort to defeat the Nazis in World War II, describes ubiquitous feedback systems that can be understood mathematically. We have largely forgotten Wiener’s great contribution to the computer age, even as artificial intelligence has proliferated and become commonplace. AI was coined by the late John McCarthy, another mathematician turned computer scientist, at the dawn of AI in 1956, the genesis of the official effort to program computers to exhibit human-like intelligence. McCarthy disliked Wiener; at any rate he wished to rebrand the fledgling field of computer research and development in terms that would be marketing friendly and evocative of future possibility.

The terms here matter. Wiener was fascinated by the potential of computers to imbue the world with new forms of control, and his cybernetics movement was largely focused on how new technologies interact with humans to enable new forms of behavior. What he downplayed, the “internal” or individual intelligence of such technologies, McCarthy and the new era of AI scientists made central. Wiener was prescient enough to extend his thinking about mathematical and computational systems of control to broader issues of humanity, writing in the 1950s a largely forgotten but strikingly relevant book of warning about the age of technology then just beginning. The Human Use of Human Beings is an extended meditation on new forms of control made possible by the development of advancing technologies. Written at the apex of the Cold War, his words are colored by fears of rogue nations obliterating the world with nuclear weapons (still with us), and new forms of totalitarian oppression put on steroids by computational systems in the hands of bad actors (also still with us). We moderns can recognize the wisdom in his early alarm, but the AI revolution that unfolded in the last seventy years has subtly shifted our attention from control to intelligence.

Fast forward to today, and we’re living in the AI world, the one crafted by McCarthy (and even earlier, by Alan Turing) and company. We are now awash in “smart technology” and “deep learning”—terms that prop up the interpretation of AI not as a technology of control but of increasing intelligence. Predictably, we are also living in a world seemingly slow to recognize the downside of AI viewed as control-enabling technology, put to purposes by the human inventors and owners of large systems. The AI legacy of McCarthy gave birth to our current obsession with seeing machines as competing with us for the role of most intelligent. Philosophers like Oxford’s Nick Bostrom capture the AI imagination (and display its blind spots) with talk of a coming superintelligence, made possible by the continued march of AI towards greater and greater intelligence.

Harvard professor of business Shoshana Zuboff’s bestseller The Age of Surveillance Capitalism is a kind of reincarnation of Wiener’s focus on computation as control—and it couldn’t come at a better time, as Big Tech companies like Google, Facebook, Amazon, Microsoft and Apple increasingly make news for a grab bag of broadly anti-human and anti-democratic violations. Antitrust legislation is due to be introduced in the Senate on the heels of committee hearings calling to account Mark Zuckerberg of Facebook and other heavyweights of Big Tech, like Jeff Bezos at Amazon and Larry Page and Sergey Brin at mega-tech giant Google. Privacy issues as well as complaints about information monopolies and even mental health worries have all peppered the landscape of once-lionized Silicon Valley companies that seemingly turned lead into gold, remaking our world with vast services and capabilities unthinkable even two decades ago. But Zuboff has performed perhaps the most valuable service of all, as her latest book takes the often disparate and inchoate feelings that Big Tech has run amok and pulls them together into a framework that gets at the nub of the problem for us.

It’s surveillance, as The Age of Surveillance Capitalism tells us, but the best way to read Zuboff is as a continuation of Wiener’s early warnings that computational systems are best viewed as new forms of control. It works like this. Once upon a time, tech companies like Google realized that gathering information about users of their systems facilitated improved user experience. In the case of Google, the more we give Google access to our information—in prior keyword searches, clickstreams, and a host of other seemingly private information like our birthdates, shopping habits, and even physical location (tracked by apps on our smartphones)—the more relevant future searches on Google will be for us.

The more the system knows, the more benefit we get. This is an immediate value-add for the now billions of people using Google, and it represents a genuine business insight that Google understandably lauds as a benefit passed along to its user base. But there’s another gambit here—another insight—which followed quickly on the heels of the first (typically called “personalization”). Fairly quickly, the value-add for users, which translates directly into better search results, taps out, and our data exhaust, as it’s called, yields a surplus of private data about us that isn’t directly useful for improving our search results. It’s a new form of surplus, a behavioral surplus, as Zuboff calls it, and the discovery of this surplus ushered in the modern Big Tech era, and by degrees pulled the modern world into an entirely new and virulent form of profit-making.

Page and Brin (founders of Google) realized by the early 2000s that the very same mechanism that improved user results by capturing private data about users in data “exhaust” also made possible the targeting of ads to users that could pay back anxious early-stage investors and turn profits for the company. Google, now famously, was issued a patent in 2006 for AI-based methods (called in yet more intelligence-friendly terms “machine learning”) that capture the wealth of private user data generated by us online to, in effect, predict our future behavior. The predictions translate into profits when tied to advertising clicks. Thus the age of click-through rates emerged on the tail of the initially salutary motive to personalize online services. Click-throughs are big money, as Google and its investors soon discovered. And, subtly yet importantly, the AI algorithms powering Google turned toward the control paradigm that Wiener warned us might be the true legacy of the new science.

Zuboff’s book is a masterpiece at framing the issue of AI—though her discussion of AI is derivative of her discussion of the Big Tech companies that employ it—as a form of control that tends to concentrate power in the hands of the owners of the systems, not the end users. What results is an Orwellian picture of modern life sans totalitarianism. Anti-democratic countries like China might celebrate expansion of surveillance in top-down fashion by Big Brother government, but Silicon Valley doesn’t care about owning your soul or policing your thoughts. Your behavior online suffices. As Zuboff points out, Big Tech doesn’t care what you say or do in the end (though, notoriously, it sometimes seemingly does, as with recent actions taken by Amazon, Facebook, and Twitter to limit certain types of political content). It simply needs to know more and more about you—where you go, what you eat, who you date, what you say in text messages, and of course what you search for online. That surplus is the key to their profits, and so is understandably guarded and defended in spite of mounting evidence that this newly discovered form of capitalism is virulent.

Zuboff introduces another concept in The Age of Surveillance Capitalism that goes a long way to unpacking what might otherwise seem an academic or nitpicking worry about services like Google and Facebook, which admittedly have been embraced and enjoyed by billions. For the result of zealously pursuing any and all means of capturing our behavioral surplus for purposes of predicting our future behavior (to sell ads, remember) is an information asymmetry that forms between the Big Tech owners and the rest of us. Whereas the industrial revolution created among its consequences a division of labor, resulting in well-known asymmetries of power between workers and owners, the new information revolution and, in particular, the focus of surveillance capitalism on knowledge collection create a division of learning, according to Zuboff. Big Tech owns the server farms making the modern web possible; it also knows a lot about you without you in turn knowing much about it—what it knows about you, how it knows it, and how it’s all to be used. She invokes early sociologist Émile Durkheim’s memorable warning about capitalism generally tending toward “extreme asymmetries of power,” only now in the creepy guise of a disparity in who gets to know.

Her coinage of terms here amounts to a deep dive. The book puts forward not just a pointed criticism and warning of the power of Big Tech in our lives; it also ambitiously and effectively erects a kind of framework within which readers can both see the problems at root and articulate them to others. The full sweep of Surveillance is vast, pulling into its orbit a historical treatment of capitalism that also illuminates cultural and social changes over the last two centuries. Our grandparents, for instance, or our distant relatives, embarked on the dizzying journey of migration to cities—looking for jobs—and kicked off what she calls the First Modernity. Changes here made the rise of the modern corporation possible, and they also shifted social dynamics toward a new appreciation of the individual, apart from traditional social groups and mores. Yet by and large First Modernity factory (then office) workers retained much of the emotional and intellectual connection to preexisting social roles and roots. The village itself may have been dismantled by migration into the city, but First Modernity individuals largely took its beliefs and practices with them. The challenge was still, we might say, extrinsic to the person.

The rise of the web and our new lives online have resulted in more radical and perhaps troubling changes. Second Modernity is marked by the loss of traditions entirely, where this new invention—the individual—becomes the locus of everything. In this more radical miasma of possibility, the internet, according to Zuboff, quickly became a tool to craft an identity. This required, among other things, an increasing openness to live and become who we are online. It’s no wonder that Durkheim’s extreme asymmetries of power get cashed out in Big Tech as a deep asymmetry in both knowledge and power. For, as our very lives are lived online in this fundamental Second Modernity way, we are vulnerable like never before to what lies on the other side of what amounts to a one-way mirror deliberately constructed by Big Tech. And here the book certainly delivers for thoughtful readers. It is in Zuboff’s sweeping yet still detail-driven stitching together of a historical narrative tracing the rise of new forms of capitalism made possible by new forms of technology that it’s possible to see the full threat of Big Tech as a natural but virulent form of capitalism.

To be sure, the Second Modernity seems worrisome and difficult even without Big Tech. Readers can be forgiven for contextualizing Zuboff’s account of the rise of the individual, and in other sections her explanation of the modern corporation as a kind of triumph of neoliberal thinking—the corporation as autonomous entity, making attempts to curtail its innovations for profit-seeking difficult. What makes Surveillance Capitalism downright spooky, however, is the case she makes for the unprecedented nature of Big Tech. Again, her concepts within the overall framework of critique are telling, and effective. Take the “two-texts” problem.

Most of us are aware that modern artificial intelligence algorithms run in the background on popular platforms like Facebook and Twitter, as well as Google, Amazon, Netflix and the rest. The AI here is a kind of giant sieve, which takes as input our clickstreams and helps to personalize content for us as we interact with those platforms. There is a positive effect here, as the system in effect learns what we like, so our future experience gets tailored to our preferences. But “preference capture” here leads a double life. Along with the public text we generate with tweets, searches, Facebook and Instagram posts and the rest, Zuboff explains that the systems are also silently building what she calls a “shadow text,” behind the scenes. We don’t have access to this shadow text (try asking Facebook for a printout of everything they know about you), and so it ineluctably results in an asymmetry in power. As she points out, too, the shadow text consists of—and has, for years—an overabundance of information about us that far exceeds its original justification as a provider of preference prediction. It’s a “behavioral surplus,” as Zuboff terms it; an over-and-above text that’s about you, but not for you. That shadow text is used, as you might guess, to make predictions about your future behavior online.

Shadow texts used by seemingly smart but mindless algorithms under the control of billionaires who aren’t accountable for their use seem pretty suspicious. Yet here again there is a kind of just-doing-our-due-diligence response readily available from Big Tech owners, as their knowledge about your day-to-day life is used to predict what ads to show you. Ads of course are old hat to capitalism. The real trouble starts when we realize that the shadow text about us (unavailable to us) is used not only to predict our future behavior for purposes of ad placement, but increasingly to nudge and manipulate our future choices so as to deliver still more juicy adverts. The slippery move from prediction markets (as Zuboff calls them) to behavior modification follows from the inevitable logic of the AI: the more it knows about us, the better it can figure out what we will do in the future. The more that becomes predictable, the further guaranteed outcomes—the Holy Grail of advertisers—become possible by reducing still further the chances of irrelevant placement. If Google can get you to click on something, it hardly need concern itself with merely predicting your future behavior as a free-thinking person. Why? Because, in an important sense, you’ve ceded some of that freedom yourself.

The problem here comes to this: Who has the right to the future tense (another of Zuboff’s portentous phrases)? What if the type of capitalism embraced by Big Tech is really a pursuit of profit at the expense of ourselves, of our own individual right to determine the future tense of our lives? What if profiteering online has by seemingly innocent steps still landed us in the creepy goo of Skinner boxes and perpetual stimulus-response behavior modification? If so—and it does seem to be so—then by any reasonable standard we’ve all wandered whistling into a morass of depersonalizing techniques that in any other context we’d deem dystopian and unacceptable. To Zuboff, that is in fact our current situation. What’s really scary in reading Surveillance Capitalism is, as we absorb her argument and reflect on our own lives online, how clearly Big Tech’s mastery of profit by behavior modification is already today’s reality. Who has a right to the future tense, in a democratic and free society? We do. All of us. Zuboff, in the end, makes a convincing case that our freedom is under attack by Big Tech. That’s unacceptable.

What then do we do? This call-to-arms question was, really, the same one Wiener asked in the 1940s, worried then about new forms of control rather than dazzling computational “intelligence.” His cautionary tone echoes perfectly through the decades, and now seems particularly prescient. The Age of Surveillance Capitalism fits squarely in this tradition, and represents a new and significant cautionary note not about dazzling intelligence displayed by machines, but rather about the means to control and shape our lives against our best interests by mindless systems of control owned and operated for profit. That’s a peril, to be sure. And one that will no doubt persist until we wake up to the new reality we all now live in.

***

Erik J. Larson is a philosopher, computer scientist, and tech entrepreneur. Founder of two DARPA-funded AI startups, he currently works on the underpinnings of natural language processing and machine learning. He has written for professional and popular journals (such as The Atlantic) and has probed the limits of current AI technologies (such as with the IC2 tech incubator at the University of Texas at Austin). In April 2021 he published with Harvard University Press The Myth of Artificial Intelligence: Why Computers Can’t Think the Way We Do.